How emotion shapes realistic AI photo generation in 2026

Many people believe AI-generated photos lack genuine emotion, producing cold, mechanical images that feel disconnected from human experience. Recent advances in artificial intelligence are challenging that assumption. Newer AI systems integrate emotion models that help produce nuanced, emotionally resonant images, capturing the warmth and connection people look for in couple photos and baby visualizations. These advances combine psychology, computer vision, and generative modeling to produce images that feel authentic and meaningful. For couples seeking memorable keepsakes or families curious about future children, emotionally intelligent AI photo generation opens up new creative possibilities without expensive studio sessions or technical skills.

Table of Contents

- Understanding How AI Captures Emotion In Photos

- Why Emotion Matters For Couple Photos And Future Baby Images

- Challenges And Advances In Nuanced Emotion Generation By AI

- How To Use Emotional AI Photo Generation Tools Effectively

- Discover Personalized AI Photo Keepsakes With PairFuse

- Frequently Asked Questions

Key takeaways

| Point | Details |
|---|---|
| Emotion integration | AI now uses multi-modal models and psychology-based frameworks to embed nuanced emotional qualities directly into generated photos |
| Continuous modeling | Valence-Arousal scales capture subtle emotional states more effectively than simple happy or sad categories |
| Couple and family appeal | Emotionally resonant AI photos create meaningful keepsakes for relationships and future baby visualizations |
| Bias awareness | Some AI systems unintentionally favor negative emotions, requiring careful tool selection and prompt refinement |
| Practical accessibility | Advanced emotion-aware tools enable anyone to create professional-quality, emotionally rich images without studios |

Understanding how AI captures emotion in photos

Artificial intelligence captures emotion in photos through multimodal systems that analyze and generate visual content based on psychological models. CoEmoGen, for example, uses multimodal large language models (MLLMs) to write captions focused on an image's emotion-triggering content, and adds hierarchical low-rank adaptation (HiLoRA) modules to a diffusion model to capture emotion-specific semantics. Together these help the model learn which visual elements evoke particular feelings. Broadly, such systems first produce detailed descriptions of an image's emotional qualities, then use specialized neural network components to translate those descriptions into pixel-level image features.

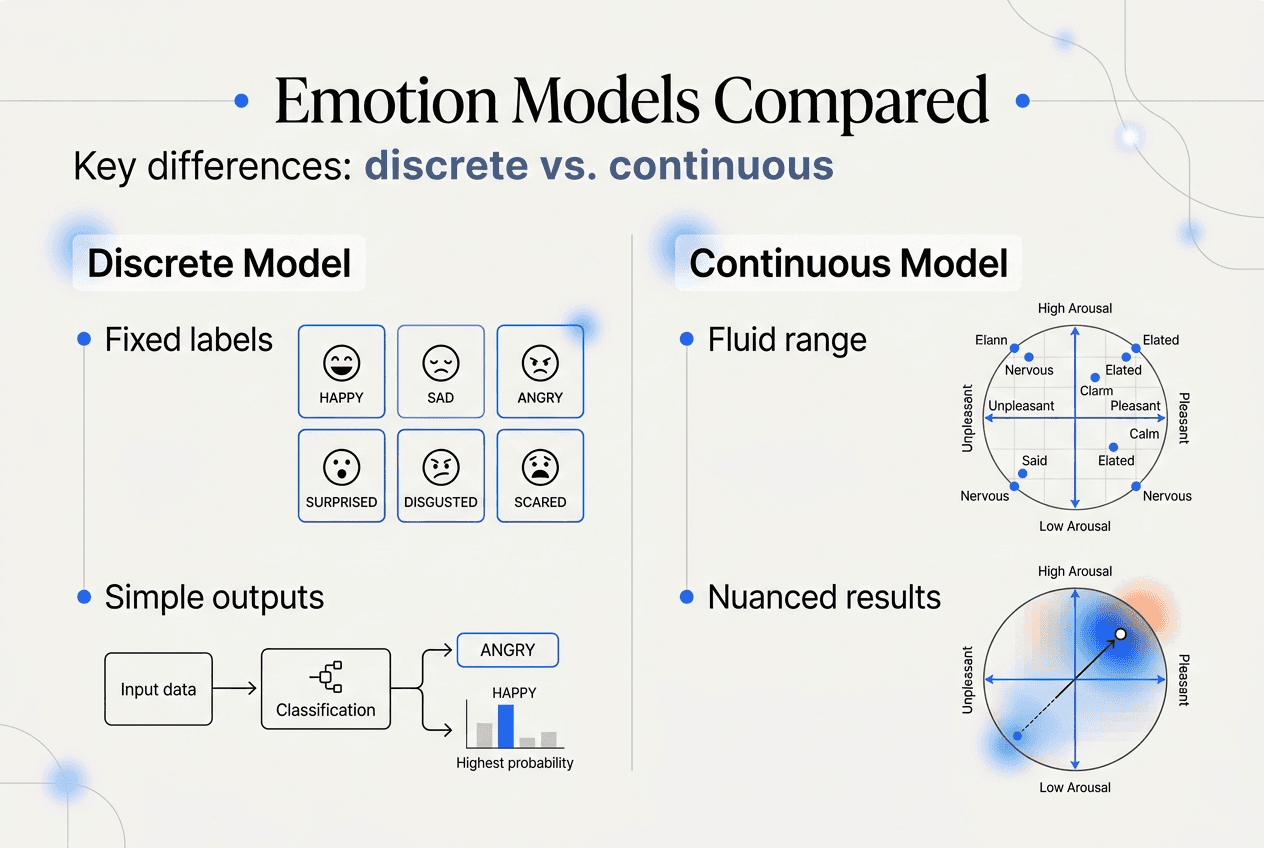

The technical foundation relies on understanding how humans perceive emotion in images. Traditional approaches sorted emotions into discrete buckets such as happy, sad, or angry. Newer work treats this as an oversimplification of human experience. EmotiCrafter, for example, conditions generation on continuous Valence-Arousal (V-A) values and reports better results than discrete-category methods for emotional image generation. The V-A model represents a feeling along two axes: valence (positive versus negative) and arousal (excited versus calm). This creates a continuous emotional space in which subtle variations matter.
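To make the two axes concrete, here is a minimal Python sketch. It places a few discrete labels at assumed coordinates in a valence-arousal plane scaled to [-1, 1] and snaps any continuous point to its nearest label. The anchor coordinates are illustrative guesses, not values from EmotiCrafter or any dataset; the sketch only shows how collapsing to a label throws away information that two continuous numbers keep.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class EmotionPoint:
    """A feeling as a point on two axes, each scaled to [-1, 1].

    valence: negative (-1) to positive (+1)
    arousal: calm (-1) to excited (+1)
    """
    valence: float
    arousal: float


# Illustrative anchor coordinates (assumed for this sketch, not from any paper).
DISCRETE_ANCHORS = {
    "happy": EmotionPoint(0.8, 0.5),
    "calm": EmotionPoint(0.4, -0.7),
    "sad": EmotionPoint(-0.7, -0.4),
    "angry": EmotionPoint(-0.6, 0.8),
}


def nearest_label(point: EmotionPoint) -> str:
    """Collapse a continuous point to the closest discrete label (Euclidean distance)."""
    return min(
        DISCRETE_ANCHORS,
        key=lambda name: math.dist(
            (point.valence, point.arousal),
            (DISCRETE_ANCHORS[name].valence, DISCRETE_ANCHORS[name].arousal),
        ),
    )


mild_contentment = EmotionPoint(valence=0.5, arousal=0.2)
elation = EmotionPoint(valence=1.0, arousal=0.9)
print(nearest_label(mild_contentment))  # happy
print(nearest_label(elation))           # happy
```

Mild contentment and elation end up with the same label even though they call for very different images; a continuous model can target each one directly.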

Diffusion models generate images by gradually refining random noise into a coherent picture. When emotion is added as a condition, the model receives guidance at each denoising step about the emotional qualities the final image should convey. During training, the model learns associations between visual patterns and emotional responses, such as warm lighting suggesting intimacy or soft focus creating a dreamy feel. This works through emotion embedding: abstract feelings are mapped to numeric representations that steer concrete visual features the model can manipulate.
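Each emotion-aware system defines its own conditioning mechanism, so the sketch below is a stand-in rather than any specific paper's method: it shows classifier-free guidance, a widely used way to apply a text or embedding condition at every denoising step. It assumes you already have two noise predictions from the same model for the current step, one made with the emotion condition and one without, and represents them as plain Python lists for readability.

```python
from typing import List

Vector = List[float]


def guided_noise_estimate(
    eps_uncond: Vector, eps_emotion: Vector, guidance_scale: float
) -> Vector:
    """Classifier-free guidance for one denoising step.

    eps_uncond:  noise predicted with an empty or neutral condition
    eps_emotion: noise predicted with the emotion condition
                 (for example, an emotion caption or embedding)
    guidance_scale: 1.0 reproduces the conditioned prediction; larger
                    values push further in the direction of the condition.
    """
    if len(eps_uncond) != len(eps_emotion):
        raise ValueError("predictions must have the same shape")
    return [u + guidance_scale * (c - u) for u, c in zip(eps_uncond, eps_emotion)]


print(guided_noise_estimate([0.0, 0.5], [0.25, 0.25], guidance_scale=2.0))
# [0.5, 0.0]
```

With `guidance_scale` above 1, the estimate moves past the conditioned prediction, which typically makes the condition more visible in the image but can hurt naturalness if pushed too far.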

For couple photos and baby images, this makes a visible difference. An emotion-aware model does not just place two people together; it renders the visual cues that communicate connection, tenderness, or joy. Facial expressions, body language, lighting choices, and color palettes all work together to evoke a specific emotional response.

Key emotion modeling techniques:

- Multi-modal language models analyze text descriptions and visual content simultaneously to understand emotional context

- Hierarchical modules separate high-level emotional concepts from low-level visual details for precise control

- Continuous emotion scales allow fine-tuned emotional expression beyond simple categories

- Diffusion guidance integrates emotional objectives throughout the generation process

Pro Tip: When selecting AI photo tools, look for platforms that mention emotion modeling or psychological frameworks in their technical descriptions, as these typically produce more nuanced, authentic results than basic generators.

Understanding these foundations helps explain why some AI photos feel genuinely moving while others seem flat: the difference lies in how deeply emotion is integrated into the generation process. For practical guidance on putting these capabilities to work, see the guide to AI-generated portrait best practices.

Why emotion matters for couple photos and future baby images

Emotion transforms AI-generated photos from simple visual compositions into meaningful keepsakes. Couples increasingly turn to AI to create romantic, styled images that reflect their emotional bond without coordinating a professional photoshoot. AI couple photo generators merge two portraits into a romantic scene rendered in a chosen emotional style, letting partners see themselves in dreamy settings, vintage aesthetics, or cinematic moments that capture their relationship’s unique character.

The emotional dimension matters because photos serve purposes beyond documentation. They celebrate connections, mark milestones, and create tangible representations of intangible feelings. When AI generates couple images with emotional intelligence, the results feel personal rather than generic. A photo styled with warm sunset tones and soft focus doesn’t just show two people together; it visually communicates intimacy and romance.

Future baby generators add another layer of emotional significance. AI future baby generators blend parental features to create realistic, emotionally meaningful images that help couples visualize their potential family. These aren’t scientific predictions but emotionally engaging interpretations that combine recognizable traits from both parents. The emotional impact comes from seeing familiar features merged into a new face, creating a sense of possibility and connection to an imagined future.

Practical applications extend across celebrations, announcements, and personalized memorabilia. Couples use emotionally styled AI photos for anniversary gifts, social media sharing, or home decor. The ability to specify emotional tones like joyful, tender, or playful allows customization that matches specific occasions or personal preferences. This flexibility makes AI photo generation valuable for creating exactly the emotional atmosphere you want.

Common emotional applications:

- Romantic anniversary keepsakes featuring couples in dreamlike or vintage settings

- Future baby visualizations for family planning discussions or pregnancy announcements

- Personalized gifts showing relationships in artistic or cinematic emotional styles

- Social media content expressing relationship milestones with visual storytelling

- Memory preservation creating idealized versions of special moments

Pro Tip: Match emotional styles to your relationship’s personality rather than following trends, selecting tones that genuinely reflect how you experience your connection together.

Emotional quality distinguishes memorable AI photos from forgettable ones. When generation systems understand and apply emotional nuance, they create images that feel true to your experience. This matters especially for intimate subjects like romantic partnerships and family visualization, where emotional resonance determines whether an image becomes cherished or ignored. Learn more in our guides to getting the best AI photo results for couples and babies and to the appeal of AI photography for couples.

Challenges and advances in nuanced emotion generation by AI

Artificial intelligence still faces significant challenges in modeling the full spectrum of human emotion in generated images. The EEmo-Bench benchmark, for example, shows that MLLMs perform unevenly at assessing the emotions an image evokes and that nuanced emotions remain difficult, so even advanced systems miss subtle distinctions that people often recognize easily. Benchmarks like this expose gaps between AI capabilities and human emotional perception, particularly for complex feelings that blend several states.

A more concerning issue is unintended bias. Research has found that some generative models skew toward negative emotions regardless of the prompt, which complicates emotional realism: a system may produce images that feel sadder or more anxious than intended. Possible causes include training data that over-represents negative emotional examples, or statistical associations the model learns between certain visual patterns and negative feelings. For couple photos and baby images meant to convey joy and connection, this bias is an obvious problem.

Continuous emotion models offer solutions to some challenges. Rather than forcing feelings into discrete boxes, Valence-Arousal frameworks represent emotions along scales measuring positivity and energy levels. This approach captures nuance that categorical systems miss. A photo can be simultaneously calm and joyful, or excited and tender, combinations that discrete happy or sad labels cannot express. The technical advantage lies in giving AI more precise targets during generation.

| Aspect | Discrete emotion models | Continuous emotion models |
|---|---|---|
| Representation | Fixed categories like happy, sad, angry | Scales measuring valence and arousal levels |
| Nuance | Limited to predefined emotional states | Captures subtle variations and blended feelings |
| Common biases | May default to stereotypical expressions | Can drift toward negative valence unintentionally |
| Best for | Simple, clear emotional communication | Complex, authentic emotional portrayal |

Ensuring balanced emotion generation requires deliberate technical choices. Developers must curate training datasets representing positive emotions adequately, implement bias detection during generation, and provide users controls for adjusting emotional qualities. For couple and baby photos, authenticity depends on systems that can faithfully render warmth, tenderness, and joy without unintended negative undertones.
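Dataset curation can take many forms. One simple, commonly used option is to weight training samples inversely to how often their group appears, so an over-represented group stops dominating. The Python sketch below assumes each training image already carries a valence score in [-1, 1] and groups scores by sign with assumed cut-offs of 0.1 either side of zero; real pipelines may balance on finer emotion categories instead.

```python
from collections import Counter
from typing import Dict, List


def valence_group(valence: float) -> str:
    """Bucket a valence score in [-1, 1] into a coarse group (cut-offs are assumptions)."""
    if valence > 0.1:
        return "positive"
    if valence < -0.1:
        return "negative"
    return "neutral"


def balancing_weights(valences: List[float]) -> List[float]:
    """Per-sample weights inversely proportional to group frequency.

    Every group ends up with the same total weight, so an over-represented
    negative group no longer dominates sampling or the training loss.
    """
    groups = [valence_group(v) for v in valences]
    counts: Dict[str, int] = Counter(groups)
    n_groups = len(counts)
    total = len(valences)
    return [total / (n_groups * counts[g]) for g in groups]


# Three negative examples and one positive one:
print(balancing_weights([-0.8, -0.5, -0.6, 0.7]))
```

Here each negative image gets weight 2/3 and the single positive image gets 2.0, so both groups contribute a total weight of 2.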

Technical challenges in emotion AI:

- Subjective interpretation makes ground truth difficult to establish for training

- Cultural variations in emotional expression complicate universal models

- Subtle facial and contextual cues require fine-grained visual understanding

- Balancing emotional intensity with natural realism

The field continues to advance rapidly. Researchers are developing better evaluation methods, more representative training data, and refined architectures that separate emotional control from other image qualities. For users, understanding these challenges helps set realistic expectations and guides tool choice. Platforms that invest in emotion modeling research typically produce more reliable, authentic results than those that treat emotion as an afterthought. For more on navigating these technical considerations, see the guide to AI-generated portrait best practices.

How to use emotional AI photo generation tools effectively

Getting emotionally meaningful results from AI photo generators requires a deliberate approach and some understanding of how these systems work. Following a structured process improves the chances that your generated images capture the feelings you want to express.

Step 1: Select an emotional style that matches your desired mood. Most advanced platforms offer style presets such as romantic, joyful, dreamy, or tender. On emotion-aware platforms these presets act as more than visual filters; they steer the whole generation process. Choose a style that fits your purpose, whether that is an anniversary keepsake, a future baby visualization, or a relationship celebration image.

Step 2: Use continuous emotion values if the tool allows for nuanced control. Some platforms let you adjust emotional qualities along scales rather than picking fixed categories. Experiment with valence settings to control positivity levels and arousal controls for energy or calmness. This granular control produces more personalized results than one-size-fits-all emotional presets.
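If a tool only accepts text prompts, one assumed workaround is to translate the valence and arousal you have in mind into descriptive words. This Python sketch is hypothetical: the 0.3 dead zone and the word grid are illustrative choices, not any platform's documented behavior, so adjust both to taste.

```python
from typing import Dict, Tuple

# (valence bucket, arousal bucket) -> mood wording; an illustrative grid.
MOOD_WORDS: Dict[Tuple[int, int], str] = {
    (1, 1): "joyful, energetic",
    (1, 0): "warm, affectionate",
    (1, -1): "tender, peaceful",
    (0, 1): "lively",
    (0, 0): "natural, candid",
    (0, -1): "quiet, still",
    (-1, 1): "tense, dramatic",
    (-1, 0): "wistful",
    (-1, -1): "melancholic",
}


def bucket(value: float, dead_zone: float = 0.3) -> int:
    """Map a slider value (nominally in [-1, 1]) to -1, 0 or +1.

    Values inside the dead zone count as neutral; out-of-range values
    simply land in the nearest extreme bucket.
    """
    if value >= dead_zone:
        return 1
    if value <= -dead_zone:
        return -1
    return 0


def emotion_prompt(base_prompt: str, valence: float, arousal: float) -> str:
    """Append mood wording chosen from the valence/arousal grid."""
    mood = MOOD_WORDS[(bucket(valence), bucket(arousal))]
    return f"{base_prompt}, {mood} mood"


print(emotion_prompt("portrait of a couple at sunset", valence=0.8, arousal=-0.5))
# portrait of a couple at sunset, tender, peaceful mood
```

A three-by-three grid keeps the wording predictable; a finer grid gives more shades of feeling but makes results harder to compare between runs.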

Step 3: Adjust color cues consistent with known color-emotion associations. Warm tones like oranges and golds typically evoke comfort and intimacy. Cool blues suggest calmness or serenity. Soft pastels communicate tenderness. While AI handles much of this automatically, understanding these associations helps you evaluate and refine results.
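To check results against these associations, you can measure how much of an image falls in warm versus cool hues. This Python sketch classifies individual RGB colors with the standard-library `colorsys` module. The hue cut-offs and the 0.15 saturation floor are rough assumptions, and in practice you would feed it pixels read from an image with a library of your choice.

```python
import colorsys
from typing import Iterable, Tuple

RGB = Tuple[int, int, int]


def color_temperature(rgb: RGB) -> str:
    """Classify an 8-bit RGB color as 'warm', 'cool' or 'neutral' by hue.

    Assumed cut-offs: reds, oranges and yellows count as warm; cyans and
    blues as cool; greens, purples and low-saturation greys as neutral.
    """
    r, g, b = (c / 255 for c in rgb)
    h, s, _v = colorsys.rgb_to_hsv(r, g, b)
    hue_deg = h * 360
    if s < 0.15:
        return "neutral"  # near-grey: hue carries little meaning
    if hue_deg < 70 or hue_deg >= 330:
        return "warm"
    if 170 <= hue_deg < 260:
        return "cool"
    return "neutral"


def warm_share(pixels: Iterable[RGB]) -> float:
    """Fraction of pixels classified as warm (0.0 for an empty input)."""
    labels = [color_temperature(p) for p in pixels]
    return labels.count("warm") / len(labels) if labels else 0.0


# Gold, soft blue, mid grey, orange
sample = [(255, 200, 80), (60, 120, 220), (128, 128, 128), (255, 140, 0)]
print(warm_share(sample))  # 0.5
```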

Step 4: Blend facial features carefully for realistic and emotionally coherent images. For couple photos, ensure both faces receive equal emotional treatment. For baby generators, balanced feature blending from both parents creates more emotionally engaging results than heavily favoring one parent’s traits. The goal is harmony that feels natural.
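Production generators blend learned face representations inside the model, which a short example cannot reproduce. As a simplified stand-in, suppose each face is described by the same ordered list of normalized landmark coordinates (a hypothetical input format). A balanced blend is then a linear interpolation with equal weights, and shifting the weight shows what favoring one parent means.

```python
from typing import List, Sequence, Tuple

Point = Tuple[float, float]


def blend_landmarks(
    parent_a: Sequence[Point], parent_b: Sequence[Point], weight_a: float = 0.5
) -> List[Point]:
    """Linearly interpolate two sets of corresponding landmarks.

    weight_a = 0.5 gives an even blend; values toward 1.0 favor parent A.
    Both lists must use the same landmark order (e.g. eye corners, nose tip).
    """
    if len(parent_a) != len(parent_b):
        raise ValueError("landmark lists must correspond one-to-one")
    if not 0.0 <= weight_a <= 1.0:
        raise ValueError("weight_a must be between 0 and 1")
    weight_b = 1.0 - weight_a
    return [
        (weight_a * ax + weight_b * bx, weight_a * ay + weight_b * by)
        for (ax, ay), (bx, by) in zip(parent_a, parent_b)
    ]


print(blend_landmarks([(0.0, 0.0), (1.0, 1.0)], [(1.0, 0.5), (0.0, 0.0)]))
# [(0.5, 0.25), (0.5, 0.5)]
```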

Step 5: Preview and iterate to avoid common biases or negative emotion drift. Generate multiple variations, comparing emotional qualities across results. If images consistently feel sadder or more anxious than intended, adjust prompts or try different style settings. Iteration reveals patterns in how specific tools interpret emotional direction.
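Comparing variations is easier with numbers. Assume you rate each variation's valence on a scale from -1 to 1, by eye or with an emotion classifier (no specific tool is assumed here). The hypothetical helper below averages those scores and flags a batch whose mean falls further below your target than a chosen tolerance.

```python
from statistics import mean
from typing import Dict, List


def check_negative_drift(
    valence_scores: List[float], target_valence: float, tolerance: float = 0.2
) -> Dict[str, object]:
    """Compare a batch of variations against the valence you asked for.

    valence_scores: one score in [-1, 1] per generated variation.
    Returns the batch mean, the shortfall against the target, and whether
    the batch came out more negative than the tolerance allows.
    """
    if not valence_scores:
        raise ValueError("need at least one scored variation")
    avg = mean(valence_scores)
    shortfall = target_valence - avg
    return {
        "mean_valence": avg,
        "shortfall": shortfall,
        "drifted_negative": shortfall > tolerance,
    }


report = check_negative_drift([0.25, 0.5, 0.0, 0.25], target_valence=0.75)
print(report["drifted_negative"])  # True
```

If a batch keeps getting flagged, that is the cue to adjust the prompt or switch styles before generating more.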

Step 6: Validate emotional impact through feedback when possible. Show generated images to your partner or trusted friends, asking what emotions the photos evoke. External perspectives catch emotional mismatches you might miss through familiarity with the generation process.

Pro Tip: Save your most successful emotional style combinations as templates for future projects, building a personal library of settings that consistently produce the emotional qualities you value.
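If your tool does not save presets itself, a small JSON file is enough. This Python sketch uses hypothetical field names (`style_preset`, `valence`, `arousal`, `palette`); rename them to match the settings your platform actually exposes.

```python
import json
from dataclasses import asdict, dataclass
from typing import List


@dataclass
class StyleTemplate:
    """One saved combination of settings (field names are hypothetical)."""
    name: str
    style_preset: str
    valence: float
    arousal: float
    palette: str
    notes: str = ""


def templates_to_json(templates: List[StyleTemplate]) -> str:
    """Serialize templates to a JSON string you can write to a file."""
    return json.dumps([asdict(t) for t in templates], indent=2)


def templates_from_json(text: str) -> List[StyleTemplate]:
    """Rebuild templates from a string produced by templates_to_json."""
    return [StyleTemplate(**item) for item in json.loads(text)]


anniversary = StyleTemplate(
    name="anniversary", style_preset="romantic",
    valence=0.8, arousal=-0.4, palette="warm gold",
)
saved = templates_to_json([anniversary])
print(templates_from_json(saved) == [anniversary])  # True
```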

Used well, emotion settings and prompt adjustments can improve both realism and emotional fidelity, turning generic outputs into personally meaningful keepsakes. Because modern tools are sensitive to these inputs, small adjustments to emotional parameters can noticeably shift the final image’s impact. Treat emotion selection as seriously as you would lighting or composition in traditional photography.

Clarifying your own emotional objectives before you start helps tremendously. Ask yourself what feeling you want viewers to experience when they see the photo. A clear emotional goal guides every subsequent decision, from style selection through final refinement. For detailed techniques on pairing photorealistic quality with emotional authenticity, see our guide to making AI photos look real.

Discover personalized AI photo keepsakes with PairFuse

Now that you understand how emotion shapes AI photo generation, you can apply these insights with tools designed specifically for couples and families. PairFuse offers specialized AI systems that merge your photos into romantic scenes or create future baby visualizations with exceptional emotional resonance and photorealistic quality. Unlike generic image generators, the platform focuses on preserving recognizable facial identity while embedding the emotional warmth that makes photos truly meaningful.

The platform leverages cutting-edge emotion modeling to create keepsakes reflecting your unique bonds. Whether you want dreamy couple portraits for an anniversary, cinematic relationship images for social sharing, or realistic baby visualizations exploring your future family, PairFuse delivers emotionally authentic results without expensive studio sessions or technical complexity. Advanced facial analysis ensures both partners appear naturally in couple photos, while baby generators blend parental features into plausible, emotionally engaging infant portraits.

Explore the AI couple photo maker for romantic imagery across dozens of styles, try the free AI baby generator to visualize your potential children, or visit the PairFuse main page to discover the full range of its emotionally intelligent photo generation capabilities.

Pro Tip: Experiment with multiple emotional styles on PairFuse to find which best captures your relationship’s personality, then use those preferred settings for creating cohesive photo collections that tell your unique story.

Frequently asked questions

What is the role of emotion in AI photo generation?

Emotion integration determines whether AI-generated images feel authentic and meaningful or cold and mechanical. Modern systems embed emotional qualities through psychological models that map feelings to visual features like lighting, color, expression, and composition. For couple photos and baby visualizations, emotional intelligence creates the warmth and connection that transform generic images into cherished keepsakes. This matters especially for intimate subjects where emotional resonance defines the photo’s value.

How do continuous emotion models improve AI-generated photos?

Valence-Arousal models capture nuanced, continuous emotional states, outperforming discrete categories by representing feelings along scales rather than fixed boxes. This allows AI to generate photos expressing subtle emotional combinations like calm joy or tender excitement that simple happy or sad labels cannot capture. The technical advantage creates more faithful emotional expression, particularly important for couple and family photos requiring authentic, personalized feeling rather than generic emotional stereotypes.

Can AI-generated couple photos capture genuine emotional connection?

User studies and psychophysical evaluations suggest that AI couple photos in styles like romantic or dreamy can convey emotional connection, creating memorable images that reflect users’ bonds without a traditional photoshoot. Advanced blending techniques and emotional styling simulate relationship feelings visually through coordinated expression, body language, lighting, and atmosphere. While they do not replace real photographs, emotionally intelligent AI images can become keepsakes that resonate personally and communicate relationship qualities effectively.

Are there any biases in AI emotional image generation?

Research has found that generative AI can skew outputs toward negative emotions regardless of the prompt, potentially introducing sadness or anxiety into images meant to convey joy and connection. Likely causes include training datasets with more negative examples, or statistical patterns in the model that associate certain visual features with negative feelings. Being aware of this helps users choose platforms that invest in bias mitigation and refine prompts to counteract unintended emotional drift. Good tools provide controls for adjusting emotional balance when defaults skew negative.

What are practical uses for emotionally aware AI photos?

Couples use emotionally styled AI photos for anniversary gifts, relationship milestone celebrations, social media sharing, and home decor that reflects their bond’s unique character. Future baby generators create visualizations for family planning discussions, pregnancy announcements, or simply exploring possibilities together. The personalized nature makes these images valuable for occasions requiring emotional resonance and individual meaning. Beyond personal use, emotionally intelligent AI photos serve creative projects, artistic expression, and storytelling where authentic feeling matters more than documentary accuracy.

How do I choose AI tools that handle emotion well?

Look for platforms mentioning emotion modeling, psychological frameworks, or continuous emotion scales in their technical descriptions, as these typically produce more nuanced results than basic generators. Check whether the tool offers emotional style presets and adjustment controls rather than single-option generation. Read user reviews focusing on emotional authenticity rather than just visual quality. Test multiple platforms with the same input photos, comparing how well each captures the feelings you want to express. Quality emotion-aware tools demonstrate consistent results across different emotional styles without unintended negative bias.